Cloudflare's cache now runs on a new proxy built on Pingora ↗, the Rust-based framework that already serves a significant portion of Cloudflare's network traffic. The new proxy is faster, more memory-safe, and designed to evolve our cache architecture. It delivers immediate performance improvements and enables new caching capabilities.

- Lower latency: The new proxy reduces per-request overhead through improved connection reuse.

- Reduced cache MISSes: Enhanced cache retention improves origin offload.

- Better RFC compliance: Caching behavior more closely follows HTTP caching standards.

- Foundation for future features: The new architecture enables upcoming improvements to cache functionality and efficiency.

- Asynchronous `stale-while-revalidate`: Every request returns stale content immediately while revalidation happens in the background, instead of the first request after expiry blocking on the origin. Refer to the asynchronous `stale-while-revalidate` changelog for details.

- Unbuffered bypass by default: Responses that bypass cache are streamed directly to the client without buffering, reducing time-to-first-byte for uncacheable content.

The new architecture introduces the following behavioral changes to improve RFC compliance and correctness:

- `Vary: *` results in cache bypass: According to RFC 9110 Section 12.5.5 ↗, a `Vary` header value of `*` indicates the response varies on factors beyond request headers and must not be served from cache. Cloudflare now bypasses cache for these responses instead of storing them.

- `Set-Cookie` stripped on MISS and EXPIRED: For cacheable assets, `Set-Cookie` is now stripped on MISS and EXPIRED responses, not only on HITs.

- Floating-point TTL values: Floating-point time-to-live values (for example, `max-age=1.5`) are rounded down to the nearest integer instead of being rejected as invalid.
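Two of the changes above can be illustrated with a minimal sketch. This is not Cloudflare's implementation; the function names `parseMaxAge` and `variesOnEverything` are hypothetical and only show the described behavior: a fractional `max-age` is rounded down instead of rejected, and a `Vary` value of `*` marks the response as one to bypass.

```typescript
// Illustrative sketch only; function names are hypothetical.

// Returns the max-age in whole seconds, rounding fractional values down.
// Returns undefined when no usable max-age directive is present.
function parseMaxAge(cacheControl: string): number | undefined {
  for (const directive of cacheControl.split(",")) {
    const eq = directive.indexOf("=");
    if (eq === -1) continue; // directive without a value, such as "public"
    const name = directive.slice(0, eq).trim().toLowerCase();
    const value = directive.slice(eq + 1).trim();
    if (name !== "max-age" || value === "") continue;
    const seconds = Number(value);
    if (!Number.isFinite(seconds) || seconds < 0) return undefined;
    return Math.floor(seconds); // 1.5 becomes 1
  }
  return undefined;
}

// True when the Vary header contains "*", meaning the response
// should not be served from cache.
function variesOnEverything(vary: string | null): boolean {
  if (vary === null) return false;
  return vary.split(",").some((field) => field.trim() === "*");
}
```

With these helpers, `parseMaxAge("public, max-age=1.5")` evaluates to `1`, and `variesOnEverything("*")` evaluates to `true`.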

A deeper look at the new cache proxy is coming soon to the Cloudflare blog ↗. For background on the underlying framework, read:

You can now navigate, switch context, and take common actions in the Cloudflare dashboard without leaving your keyboard. Press `?` anywhere to see the full list. Keyboard shortcuts can be disabled by visiting your profile settings ↗.

| Shortcut | Action |
| --- | --- |
| `g h` | Go to Home |
| `g a` | Go to account overview |
| `g z` | Go to zone overview |
| `g p` | Go to your profile |
| `g w` | Go to Workers & Pages |
| `g o` | Go to Zero Trust |
| `g b` | Go to billing |
| `g 1`–`g 5` | Go to a recent or pinned item (by position in sidebar) |
| `t →` | Move to the next tab |
| `t ←` | Move to the previous tab |
| `p →` | Move to the next page of a table |
| `p ←` | Move to the previous page of a table |

| Shortcut | Action |
| --- | --- |
| `/` | Open quick search |
| `?` | Show keyboard shortcuts |
| `s a` | Switch account |
| `s z` | Switch zone |
| `s .` | Star or unstar the current zone |
| `p .` | Pin or unpin the current page |
| `t s` | Toggle the sidebar open or closed |
| `t m` | Expand or collapse all sidebar menus |
| `t a` | Toggle Ask AI sidebar |
| `d .` | Toggle dark mode |
| `c u` | Copy the current URL |
| `c d` | Copy a deep link URL |

Cloudflare Pipelines ingests streaming data via Workers or HTTP endpoints, transforms it with SQL, and writes it to R2 as Apache Iceberg tables. R2 Data Catalog manages those Iceberg tables, compaction, and compatibility with query engines like R2 SQL, Spark, and DuckDB.

You can now create and manage both products using Terraform, supported in the Cloudflare Terraform provider v5.19.0 ↗.

This adds four new resources that let you define your entire data pipeline as infrastructure-as-code: a data catalog, a stream for ingestion, a sink that writes to R2 Data Catalog or R2, and a pipeline that connects them with SQL.

The new Terraform resources are:

- `cloudflare_r2_data_catalog` ↗: enable the data catalog on an R2 bucket

- `cloudflare_pipeline_stream` ↗: create a stream that receives events via HTTP or Worker bindings

- `cloudflare_pipeline_sink` ↗: create a sink that writes to R2 Data Catalog or R2

- `cloudflare_pipeline` ↗: create a pipeline with SQL connecting a stream to a sink

Here is a minimal example that creates a stream, an R2 Data Catalog sink, and a pipeline:

```hcl
resource "cloudflare_pipeline_stream" "my_stream" {
  account_id = var.cloudflare_account_id
  name       = "my_stream"
  format     = { type = "json" }
  schema = {
    fields = [{
      name     = "value"
      type     = "json"
      required = true
    }]
  }
  http           = { enabled = true, authentication = false, cors = {} }
  worker_binding = { enabled = false }
}

resource "cloudflare_pipeline_sink" "my_sink" {
  account_id = var.cloudflare_account_id
  name       = "my_sink"
  type       = "r2_data_catalog"
  format     = { type = "parquet" }
  schema     = { fields = [] }
  config = {
    account_id = var.cloudflare_account_id
    bucket     = "my-pipeline-bucket"
    table_name = "my_table"
    token      = var.catalog_token
  }
}

resource "cloudflare_pipeline" "my_pipeline" {
  account_id = var.cloudflare_account_id
  name       = "my_pipeline"
  sql        = "INSERT INTO ${cloudflare_pipeline_sink.my_sink.name} SELECT * FROM ${cloudflare_pipeline_stream.my_stream.name}"
}
```

For a full end-to-end example that includes R2 bucket creation, data catalog setup, and scoped API token provisioning, refer to the Pipelines Terraform documentation.

You can now use `@cloudflare/dynamic-workflows` ↗ to run a Workflow inside a Dynamic Worker, ensuring durable execution for code that is loaded at runtime.

The Worker Loader loads Dynamic Workers on demand, which previously made durability challenging. Even within a Dynamic Worker, a Workflow might sleep for hours or days between steps, and by the time it resumes, the original Dynamic Worker code would no longer be in memory.

The library solves this by tagging each Workflow instance with metadata that identifies which Dynamic Worker to load — for example, a tenant ID — then reloading the matching Dynamic Worker through the Worker Loader whenever a Workflow awakens.

Because Dynamic Workers are created on-demand, you do not have to register each Workflow up front or manage them individually. Load the Workflow code in the Dynamic Worker when it is needed, and the Workflows engine handles persistence and retries behind the scenes. Your Workflow code itself is unaffected by the routing and behaves as normal.

This unlocks patterns where the Workflow code itself is dynamic. For example, this is useful with:

- SaaS platforms where each tenant defines their own automation, such as onboarding sequences, approval chains, or billing retry logic.

- AI agent frameworks where agents generate and execute multi-step plans at runtime, surviving restarts and waiting for human approval between tool calls.

- Multi-tenant job systems where each customer submits their own processing logic and every step persists progress and retries on failure.

```typescript
import {
  createDynamicWorkflowEntrypoint,
  DynamicWorkflowBinding,
  wrapWorkflowBinding,
  type WorkflowRunner,
} from "@cloudflare/dynamic-workflows";

export { DynamicWorkflowBinding };

interface Env {
  WORKFLOWS: Workflow;
  LOADER: WorkerLoader;
}

function loadTenant(env: Env, tenantId: string) {
  return env.LOADER.get(tenantId, async () => ({
    compatibilityDate: "2026-01-01",
    mainModule: "index.js",
    modules: { "index.js": await fetchTenantCode(tenantId) },
    // The Dynamic Worker uses this exactly like a real Workflow binding;
    // every create() is tagged with { tenantId } automatically.
    env: { WORKFLOWS: wrapWorkflowBinding({ tenantId }) },
  }));
}

// The entrypoint name must match `class_name` in the workflows binding of your Wrangler config file.
export const DynamicWorkflow = createDynamicWorkflowEntrypoint<Env>(
  async ({ env, metadata }) => {
    const stub = loadTenant(env, metadata.tenantId as string);
    return stub.getEntrypoint("TenantWorkflow") as unknown as WorkflowRunner;
  },
);

export default {
  fetch(request: Request, env: Env) {
    const tenantId = request.headers.get("x-tenant-id")!;
    return loadTenant(env, tenantId).getEntrypoint().fetch(request);
  },
};
```

For a full walkthrough, refer to the Dynamic Workflows guide.

Cloudflare IPsec now supports post-quantum key agreement with compatible third-party devices. Cisco ↗ and Fortinet ↗ are the first third-party vendors validated to interoperate with Cloudflare IPsec using ML-KEM (Module-Lattice-Based Key-Encapsulation Mechanism).

Post-quantum IPsec uses RFC 9370 ↗ and draft-ietf-ipsecme-ikev2-mlkem ↗ to negotiate hybrid key agreement during the IKEv2 `IKE_INTERMEDIATE` phase. This combines classical Diffie-Hellman (Group 20) with ML-KEM-768 or ML-KEM-1024 to protect against harvest-now, decrypt-later ↗ attacks.

Key details:

- Compatible with Cisco 8000 Series Secure Routers with IOS XR Release 26.1.1 and Fortinet FortiOS 7.6.6 and later.

- Uses ML-KEM-768 or ML-KEM-1024 as an additional Key Exchange to DH Group 20.

- Follows RFC 9370 and draft-ietf-ipsecme-ikev2-mlkem standards.

- No additional licensing required.

Post-quantum IPsec with third-party devices is now generally available with confirmed interoperability for the platforms listed above. Cloudflare intends to support interoperability with more vendors as they build out support for draft-ietf-ipsecme-ikev2-mlkem. Contact your account team to discuss support for additional vendors.

For supported key exchange methods and the list of validated platforms, refer to GRE and IPsec tunnels.

Cloudflare DLP now includes Data Classification, which lets administrators organize and label sensitive content using labels, templates, and reusable data classes.

With Data Classification, administrators can define labels such as sensitivity schemas and levels, and data tag groups and tags. Administrators can also build from Cloudflare-managed templates and create reusable data classes that combine detection entries, other data classes, sensitivity levels, and data tags.

You can then use those classifications in custom DLP profiles to identify the severity of sensitive content, understand where it exists, and apply that logic consistently across DLP profiles.

For more information, refer to Data Classification.

Cloudflare DLP now includes new predefined detection entries.

The expanded catalog includes detections for specific credential types, webhooks, addresses, tax identifiers, national IDs, financial data, and crypto wallets.

Examples include `GitHub PAT`, `OpenAI API Key`, `Slack Webhook`, `Discord Webhook`, `US Physical Address`, and `Bitcoin Wallet`.

For the full list, refer to Predefined detection entries.

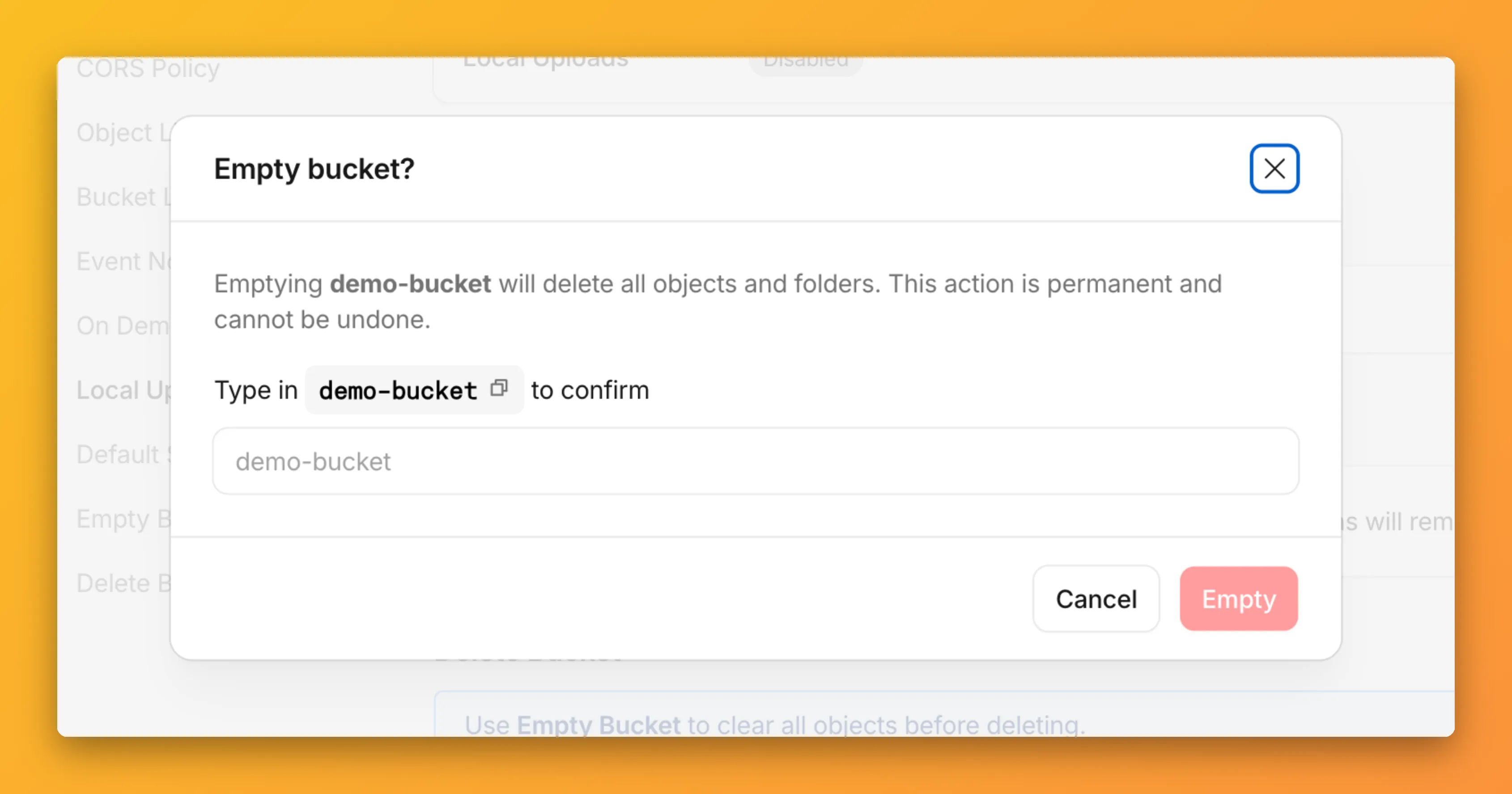

You can now empty an entire R2 bucket or delete folders directly from the dashboard. Emptying a bucket is required before you can delete it. Previously, this required scripting or configuring lifecycle rules. Now, the dashboard can handle it in a single action.

Go to your bucket's Settings tab and select Empty under the Empty Bucket section. This deletes all objects in the bucket while preserving the bucket and its configuration. For large buckets, the operation runs in the background and the dashboard displays progress.

The dashboard now guides you through emptying and then deleting a bucket in one place.

R2 uses a flat object structure. The dashboard groups objects that share a common prefix into folders when the View prefixes as directories checkbox is selected. Deleting a folder removes every object under that prefix.
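The relationship between flat keys and folders can be sketched as follows. This is an illustration of prefix grouping in general, not the dashboard's code; the function names, the `/` delimiter default, and the sample keys are assumptions.

```typescript
// Illustrative sketch only; names and sample keys are hypothetical.

// Derives top-level "folders" from flat object keys by taking everything
// up to and including the first delimiter.
function listFolders(keys: string[], delimiter: string = "/"): string[] {
  const folders = new Set<string>();
  for (const key of keys) {
    const idx = key.indexOf(delimiter);
    if (idx !== -1) folders.add(key.slice(0, idx + delimiter.length));
  }
  return Array.from(folders).sort();
}

// "Deleting a folder" means deleting every key that starts with its prefix.
function keysUnderPrefix(keys: string[], prefix: string): string[] {
  return keys.filter((key) => key.startsWith(prefix));
}

const objectKeys = [
  "logs/2024/a.txt",
  "logs/2024/b.txt",
  "images/cat.png",
  "readme.md",
];

const folders = listFolders(objectKeys);
const toDelete = keysUnderPrefix(objectKeys, "logs/");
const remaining = objectKeys.filter((key) => !key.startsWith("logs/"));
```

Here `folders` contains `images/` and `logs/`, and removing the `logs/` folder deletes both objects under that prefix while leaving `images/cat.png` and `readme.md` in place.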

From the Objects tab, you can select one or more folders and delete them alongside individual objects.

For step-by-step instructions, refer to Delete buckets and Delete objects.

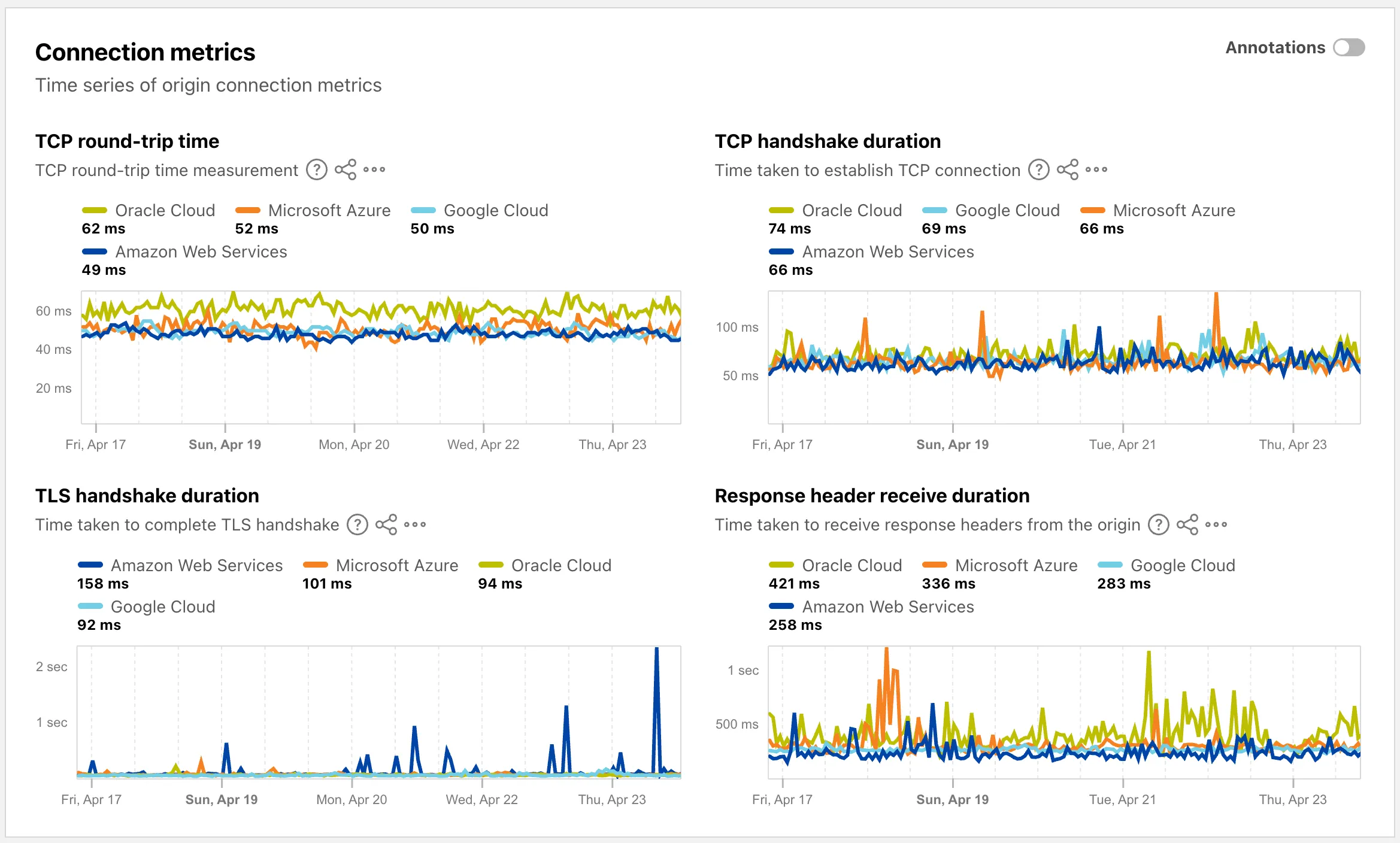

The Cloud Observatory ↗ on Radar now provides improved connection metric insights, offering new ways to explore TCP round-trip time, TCP handshake duration, TLS handshake duration, and response header receive duration across cloud provider origin servers.

The Cloud Observatory overview ↗ now shows connection metrics broken down by cloud provider, making it easy to compare connection performance across Amazon Web Services, Google Cloud, Microsoft Azure, and Oracle Cloud.

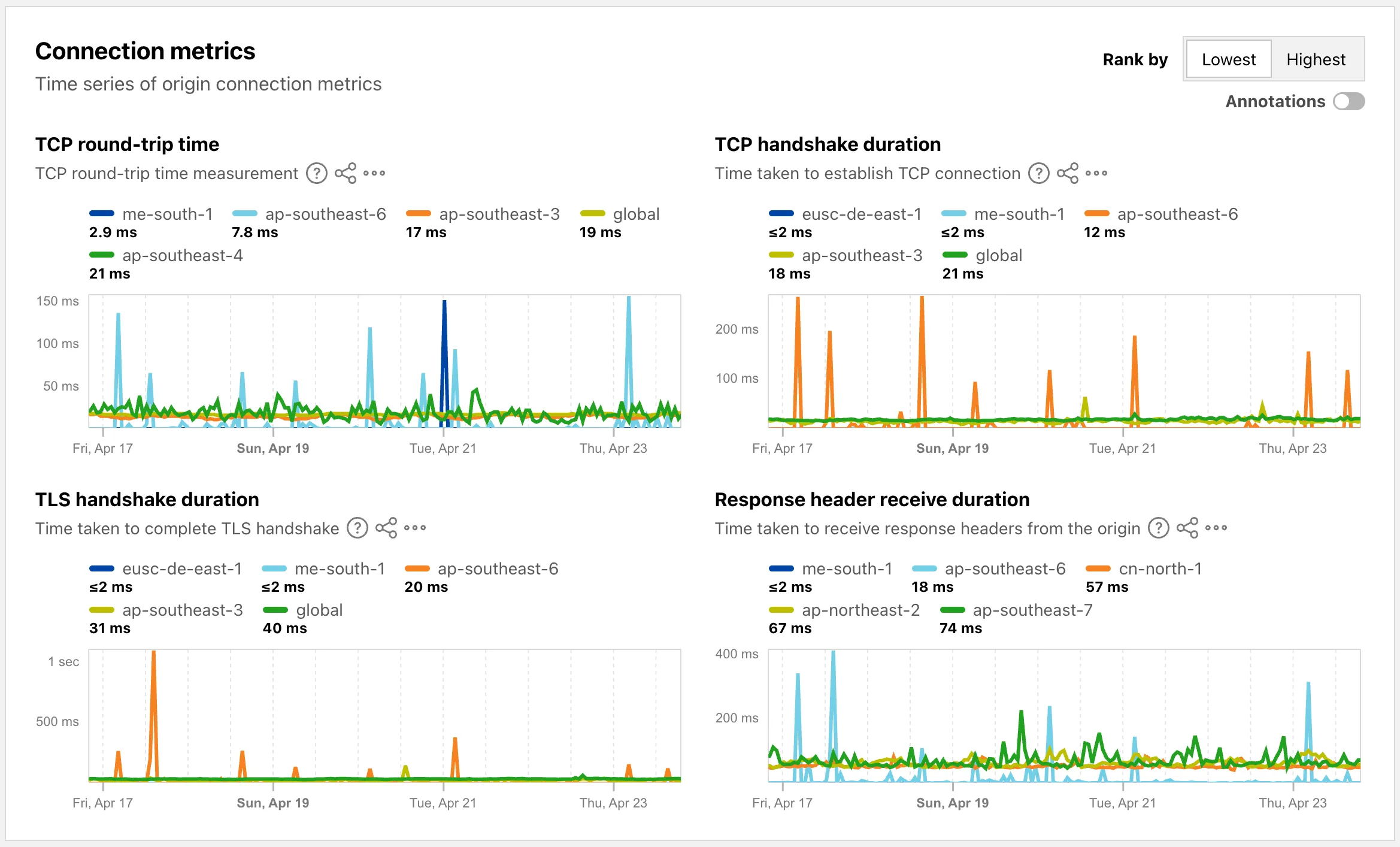

Each provider page ↗ now shows connection metrics for the top five regions, with a selector to rank by lowest or highest values.

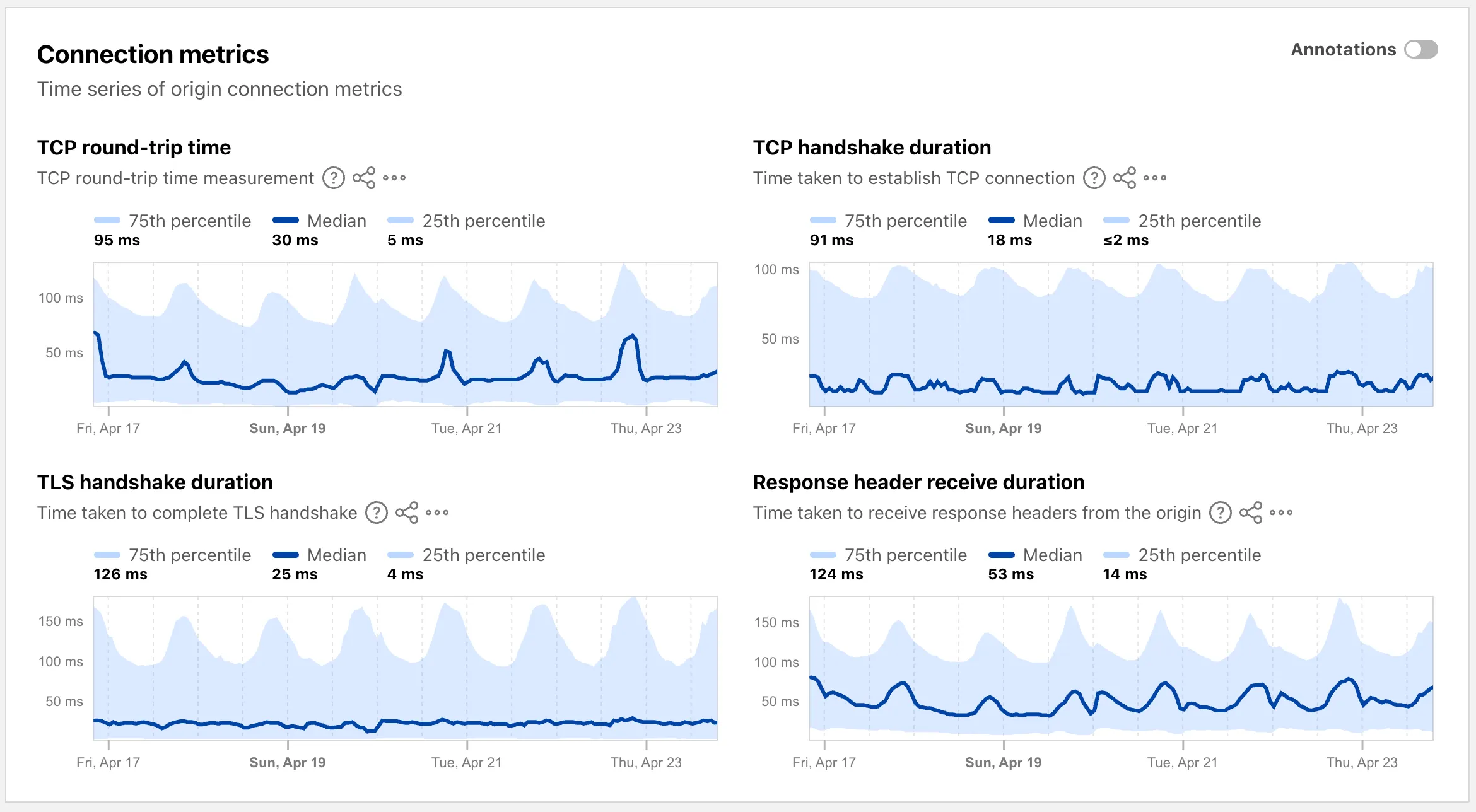

Each region page ↗ now displays connection metrics as percentile distributions (25th percentile, median, and 75th percentile), providing insight into the range and variability of connection times.
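To make the percentile view concrete, here is a minimal sketch of summarizing a set of connection-time samples as 25th percentile, median, and 75th percentile. It uses linear interpolation between the closest ranks, which is an assumption: the source does not state how Radar computes its percentiles. The sample values are invented for illustration.

```typescript
// Illustrative sketch only; the interpolation method and samples are assumptions.

// Computes the p-th percentile (0 to 100) using linear interpolation
// between the two closest ranks of the sorted samples.
function percentile(samples: number[], p: number): number {
  if (samples.length === 0) throw new Error("percentile of an empty sample set is undefined");
  if (p < 0 || p > 100) throw new Error("p must be between 0 and 100");
  const sorted = samples.slice().sort((a, b) => a - b);
  const rank = (p / 100) * (sorted.length - 1);
  const lower = Math.floor(rank);
  const upper = Math.ceil(rank);
  return sorted[lower] + (sorted[upper] - sorted[lower]) * (rank - lower);
}

// Hypothetical TLS handshake durations in milliseconds.
const handshakeMs = [50, 10, 40, 20, 30];

const summary = {
  p25: percentile(handshakeMs, 25),
  median: percentile(handshakeMs, 50),
  p75: percentile(handshakeMs, 75),
};
```

A narrow gap between `p25` and `p75` suggests consistent connection times, while a wide gap points to high variability.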

These views are also available through the `Origins` API, using the `timeseries_groups` endpoint with the `ORIGIN`, `REGION`, or `PERCENTILE` dimension.

Radar now supports dark mode. A theme selector in the upper right corner of the page lets users explicitly choose between three display options:

- Light — standard light theme

- Dark — full dark theme

- System — follows the operating system preference

The selected theme applies consistently across all Radar pages and widgets.

The theme choice also applies to shared and embedded graphs.

Try it out at Cloudflare Radar ↗.

Shared dictionaries (RFC 9842 ↗) let an origin compress a response against a previous version of the same resource that the browser already has cached, so only the difference between versions travels over the wire. Shared dictionaries passthrough is now in open beta on all plans.

In passthrough mode, Cloudflare:

- Forwards the `Use-As-Dictionary` and `Available-Dictionary` headers between client and origin without modification.

- Treats `dcb` (Dictionary-Compressed Brotli) and `dcz` (Dictionary-Compressed Zstandard) as valid `Content-Encoding` values end to end, without recompressing them.

- Extends the cache key to vary on `Available-Dictionary` and `Accept-Encoding` so each delta-compressed variant is cached correctly.
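The idea of varying the cache key on these two headers can be sketched as below. This is not how Cloudflare builds its cache key; `acceptedEncodings`, `supportsSharedDictionary`, and `variantKey` are hypothetical helpers, and the key layout is invented for illustration.

```typescript
// Illustrative sketch only; helper names and key layout are hypothetical.

// Parses Accept-Encoding into lowercase coding names, dropping any
// coding explicitly refused with q=0.
function acceptedEncodings(acceptEncoding: string): string[] {
  return acceptEncoding
    .split(",")
    .map((part) => part.trim())
    .filter((part) => part.length > 0)
    .filter((part) => !/;\s*q=0(\.0{0,3})?\s*$/i.test(part))
    .map((part) => (part.split(";")[0] ?? "").trim().toLowerCase());
}

// True when the client advertises dcb or dcz.
function supportsSharedDictionary(acceptEncoding: string): boolean {
  return acceptedEncodings(acceptEncoding).some(
    (coding) => coding === "dcb" || coding === "dcz",
  );
}

// Builds a variant key so that responses compressed against different
// dictionaries, or for clients with different codings, never collide.
function variantKey(
  url: string,
  availableDictionary: string | null,
  acceptEncoding: string,
): string {
  const codings = acceptedEncodings(acceptEncoding).sort().join(",");
  return `${url}|dict=${availableDictionary ?? ""}|enc=${codings}`;
}
```

Two requests for the same URL that carry different `Available-Dictionary` values produce different keys, so a delta computed against one dictionary is never served to a client holding another.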

Your origin manages the dictionary lifecycle: deciding which assets are dictionaries, attaching `Use-As-Dictionary` headers, and producing deltas in response to `Available-Dictionary` requests. Cloudflare handles the transport and the cache.

In internal testing on a 272 KB JavaScript bundle, the asset shrinks from 92.1 KB with Gzip to 2.6 KB with delta Zstandard against the previous version (a 97% reduction over standard compression), with download times improving by 81–89% versus Gzip.

Shared dictionaries work with browsers that advertise `dcb` or `dcz` in `Accept-Encoding`. Today, this includes Chrome 130 or later and Edge 130 or later.

Turn on passthrough for your zone with a single API call:

```bash
curl "https://api.cloudflare.com/client/v4/zones/$ZONE_ID/settings/shared_dictionary_mode" \
  --request PATCH \
  --header "Authorization: Bearer $CLOUDFLARE_API_TOKEN" \
  --json '{"value": "passthrough"}'
```

You can also turn it on under Speed > Settings > Content Optimization in the Cloudflare dashboard ↗. For full origin setup instructions and a working test recipe, refer to Shared dictionaries, or try the live demo at canicompress.com ↗.

This emergency release introduces a new rule to block a cPanel & WHM Authentication Bypass related to CVE-2026-41940.

Key Findings

- CVE-2026-41940: A critical authentication bypass vulnerability in cPanel & WHM allows unauthenticated remote attackers to bypass authentication mechanisms and gain unauthorized administrative access to the web hosting control panel. This vulnerability affects the session validation logic, enabling attackers to craft malicious requests that circumvent normal authentication checks.

Impact

Successful exploitation allows unauthenticated attackers to gain administrative control over affected cPanel & WHM installations. This leads to complete server compromise, potential theft or manipulation of hosted data, and significant service disruption across managed environments.

We strongly recommend applying official vendor patches for cPanel & WHM immediately to address the underlying vulnerability.

| Ruleset | Rule ID | Legacy Rule ID | Description | Previous Action | New Action | Comments |
| --- | --- | --- | --- | --- | --- | --- |
| Cloudflare Managed Ruleset | | N/A | cPanel - Auth Bypass - CVE:CVE-2026-41940 | N/A | Block | This is a new detection. |

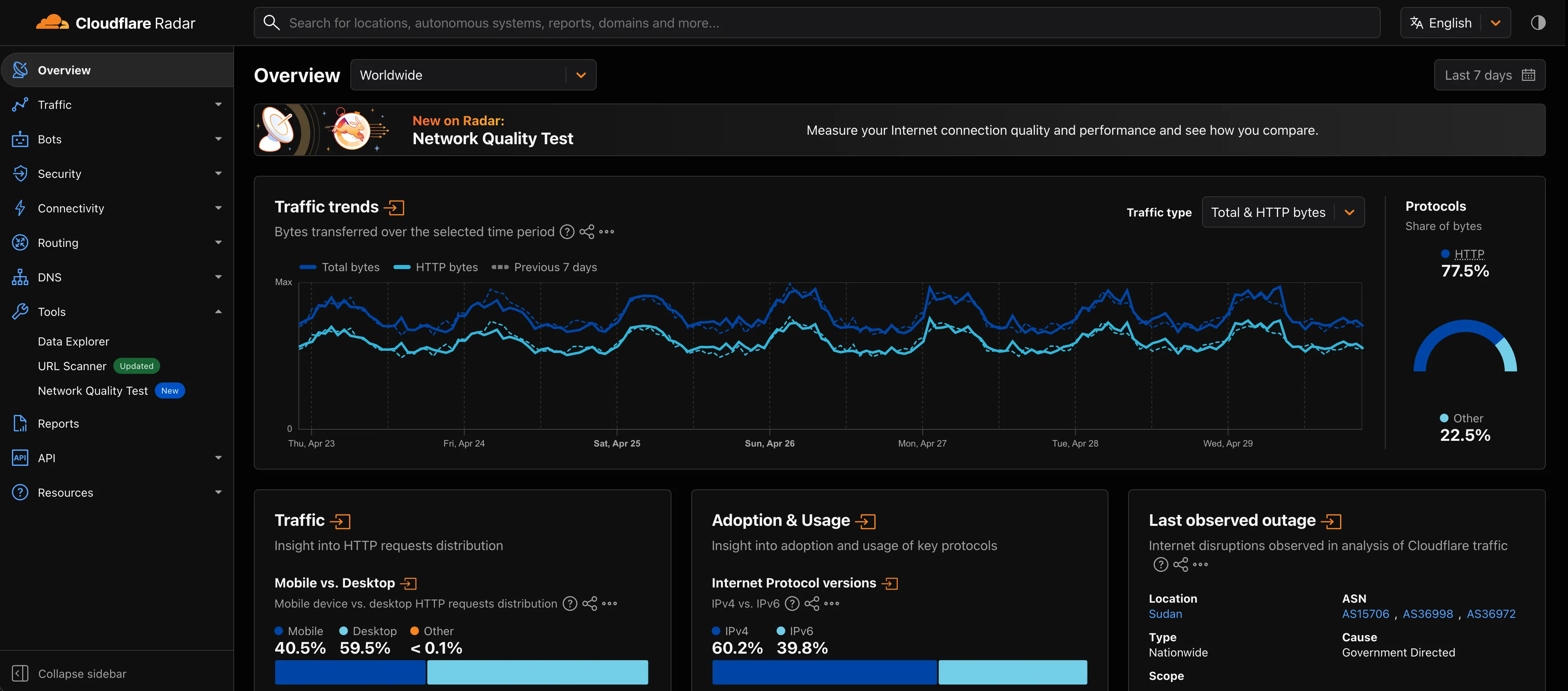

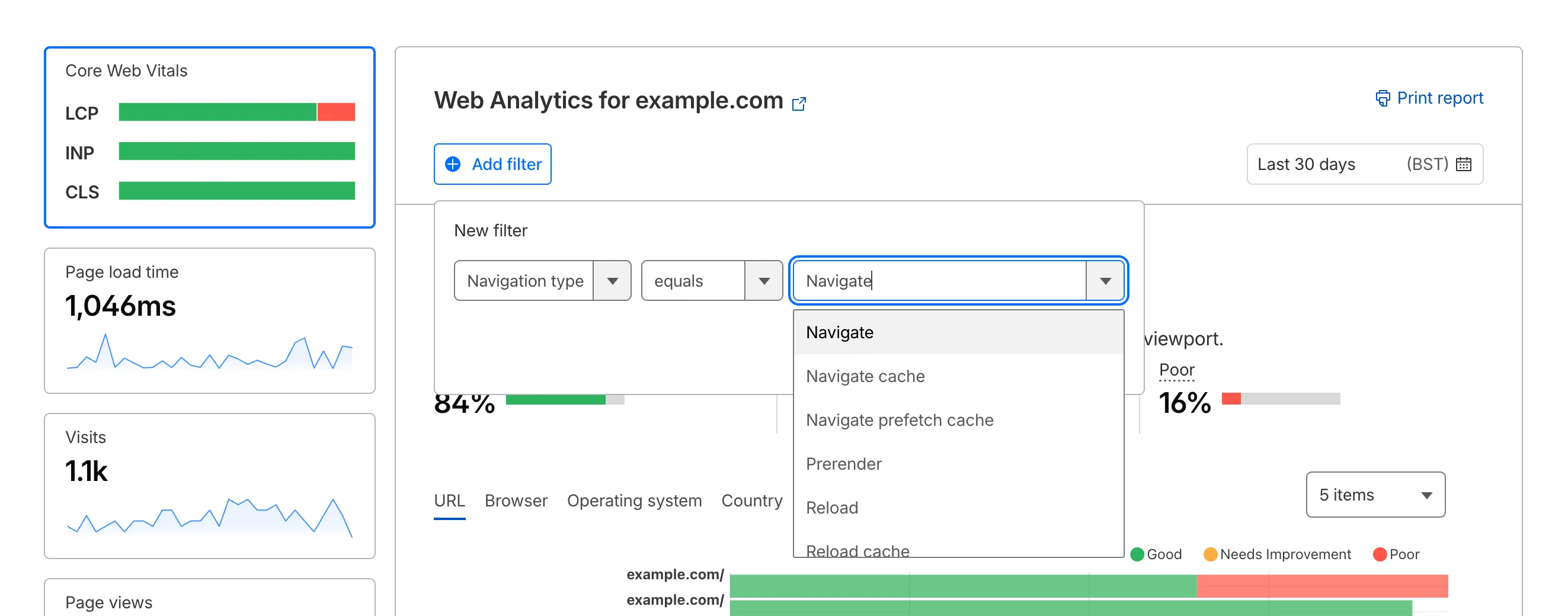

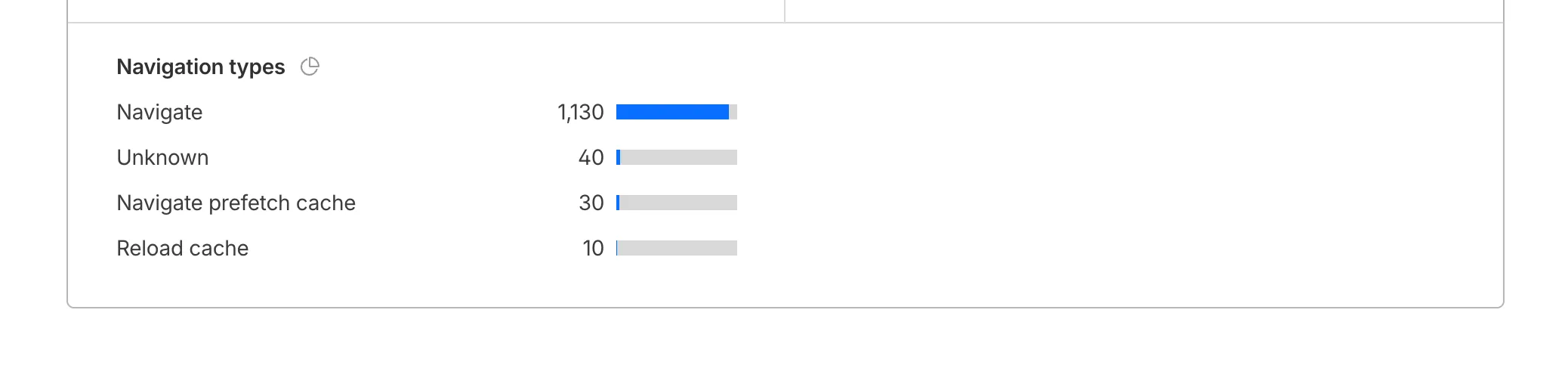

Cloudflare Web Analytics now supports Navigation Type reporting and filtering.

This update allows developers and performance analysts to see how users are navigating between pages — whether through a link click or form submission, a page reload, or using the browser's back/forward buttons — and whether a browser cache hit occurred for these behaviors.

Understanding navigation types is critical for optimizing user experience. For example, if a high volume of your traffic consists of "Back-forward" navigations versus "Back-forward Cache", those visitors are not benefiting from the Back/Forward Cache (bfcache) and therefore are experiencing higher load times due to potentially unnecessary network requests.

The same applies for regular "Navigate" entries — where "Navigate Cache", "Navigate Prefetch Cache" and "Prerender" would provide instant document retrieval — and "Reload", where "Reload cache" would be more optimal.

A high volume of "Reload" entries can also indicate a potential stability problem with your website.

By identifying these patterns, you can tune your browser caching strategies to ensure HTML documents are served instantaneously from local caches rather than requiring a roundtrip to the network.

For more information, refer to Navigation Types.

- Monitor Cache Effectiveness: See how often your site is served from the HTTP cache or bfcache.

- Identify Performance Bottlenecks: Filter by navigation type to understand how much performance you could gain by improving your browser cache hit ratio.

You can now find the Navigation Type dimension in the Web Analytics dashboard. You can filter to include/exclude one or more specific types using "equals", "does not equal", "in", or "not in" matchers.

To check the list of popular navigation types, select Page views on the Web Analytics sidebar and scroll down to the bottom:

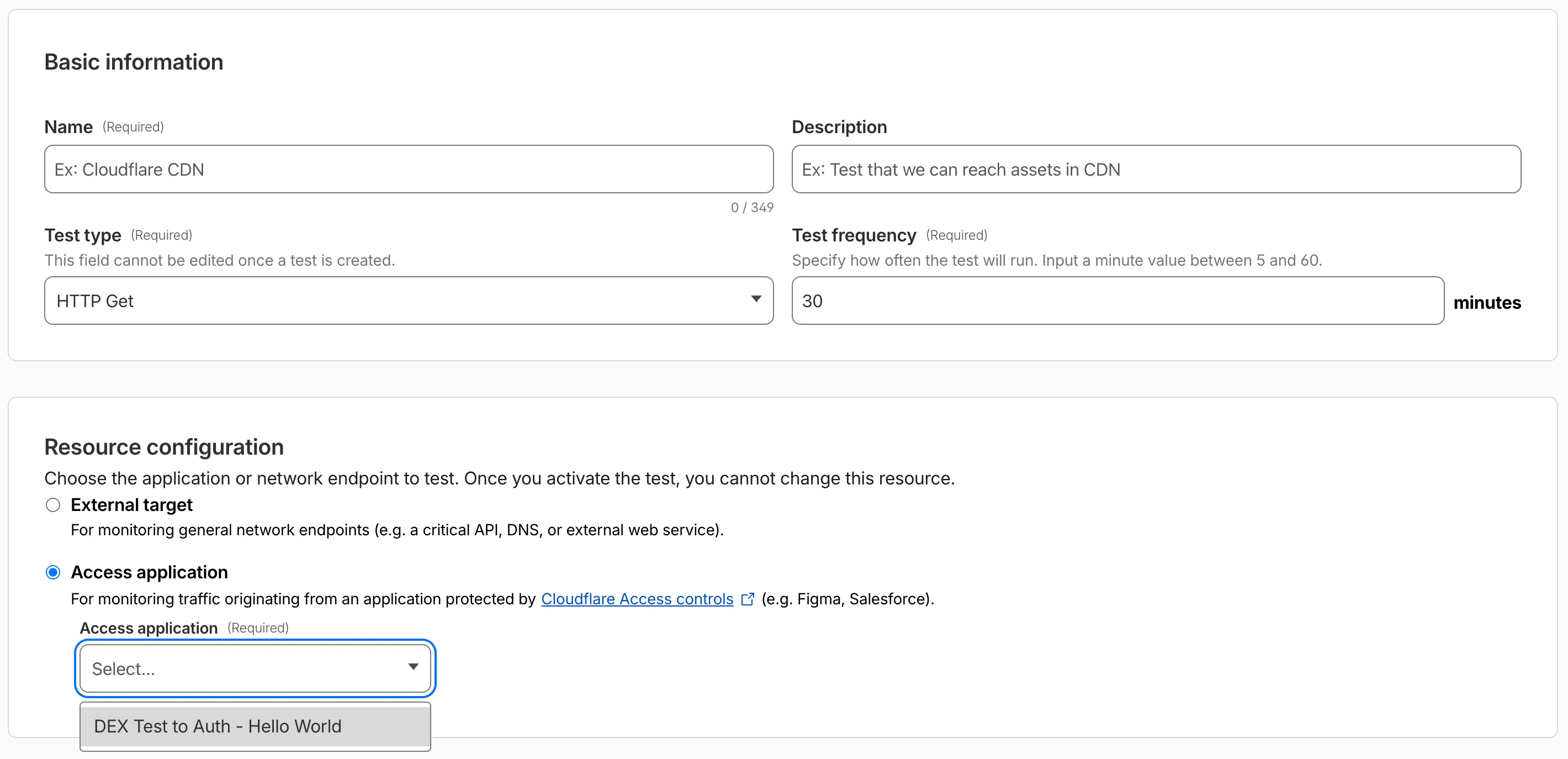

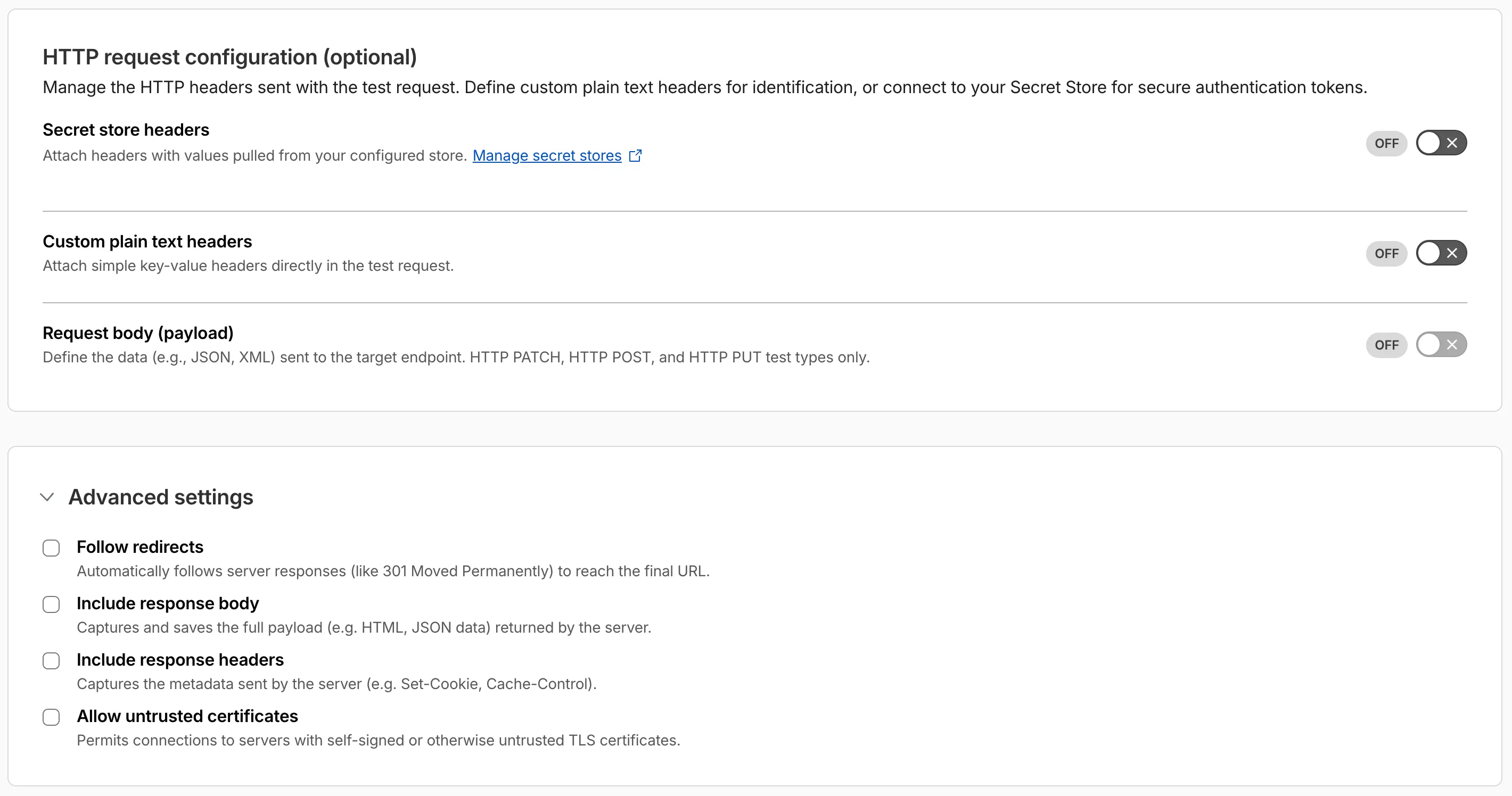

Digital experience tests now support testing applications protected by Cloudflare Access or third-party authentication. All authentication secrets are managed via Cloudflare Secret Store.

Digital experience tests also have enhanced configuration options including:

- New HTTP methods (DELETE, PATCH, POST, PUT)

- Secret Store headers, custom plain text headers, and custom request bodies

- Advanced settings: follow redirects, response bodies, response headers, and allow untrusted certificates

The Gateway Authorization Proxy and hosted PAC files are now generally available for all plan types.

Authorization proxy endpoints add an identity-aware option alongside the existing source IP proxy endpoints, using Cloudflare Access authentication to verify who a user is before applying Gateway filtering — without installing the Cloudflare One Client. Cloudflare-hosted PAC files let you create and distribute PAC files directly from Cloudflare One on Cloudflare's global network.

These features are ideal for environments where deploying a device client is not an option, such as virtual desktops (VDI) or compliance-restricted endpoints.

To get started, refer to the proxy endpoints documentation.

You can now connect Hyperdrive to a private database through a Workers VPC service. This is the recommended way to connect Hyperdrive to a private database that is not exposed to the public Internet.

When creating a Hyperdrive configuration in the Cloudflare dashboard, choose Connect to private database and then Workers VPC. From there, you can select an existing VPC service or create a new one inline by picking a Cloudflare Tunnel and entering your origin host and TCP port.

You can also create a Hyperdrive configuration backed by a Workers VPC service from the command line:

```bash
npx wrangler hyperdrive create my-vpc-database \
  --service-id <YOUR_VPC_SERVICE_ID> \
  --database <DATABASE_NAME> \
  --user <DATABASE_USER> \
  --password <DATABASE_PASSWORD> \
  --scheme postgresql
```

Workers VPC services are reusable across Hyperdrive configurations and can also be bound directly to Workers, so you can share the same private connection across multiple products.

To get started, refer to Connect Hyperdrive to a private database using Workers VPC.

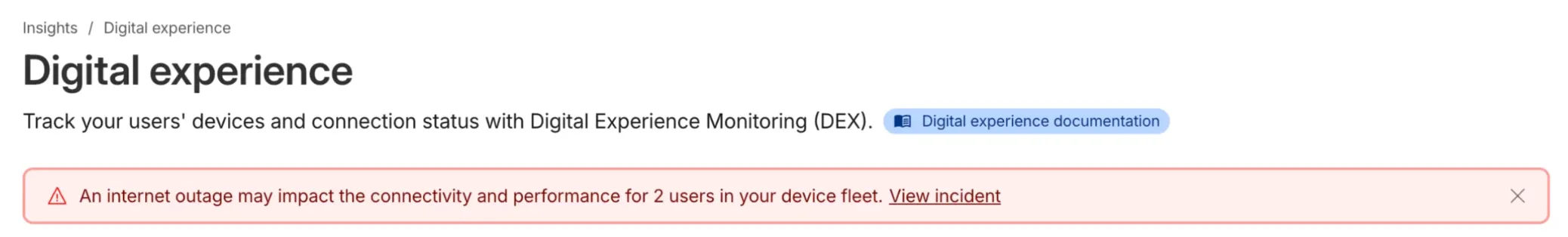

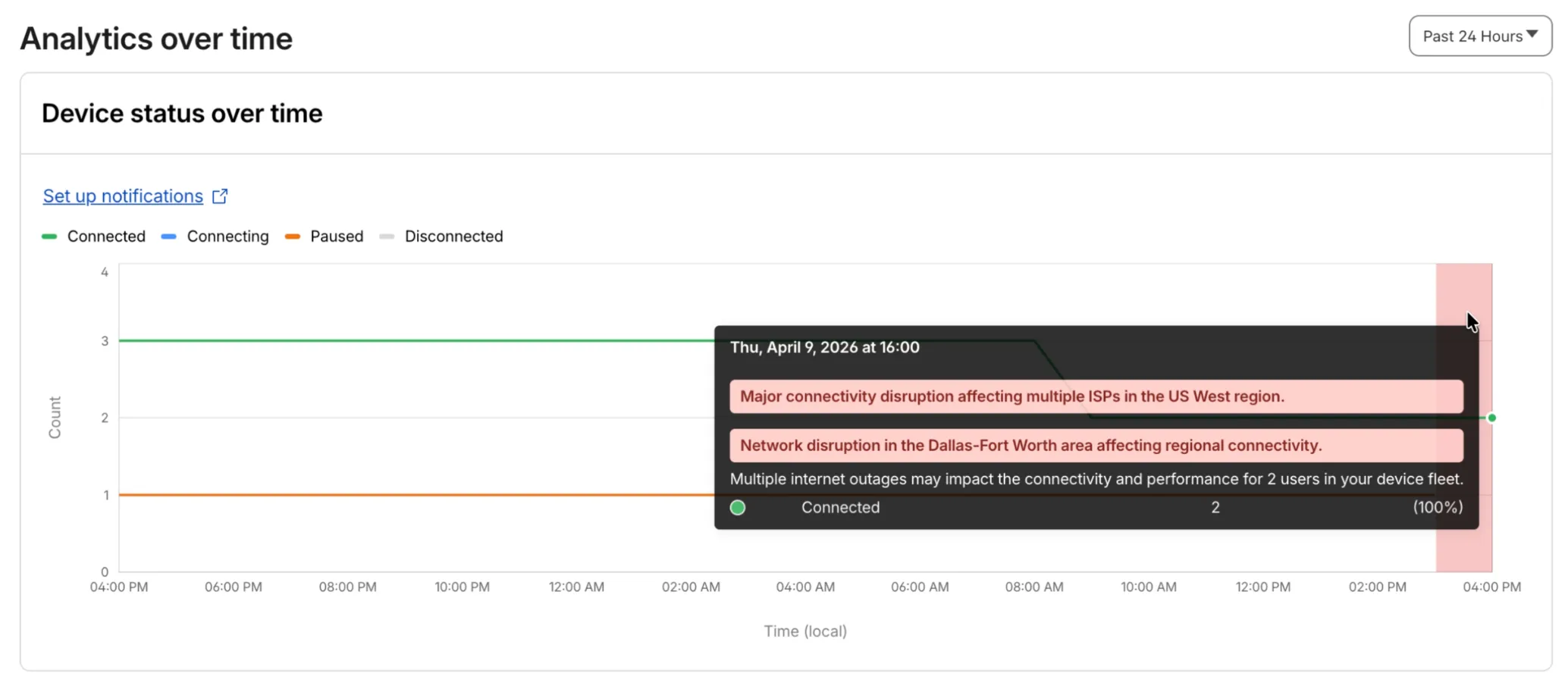

Digital Experience will display a dashboard notification when an Internet outage or traffic anomaly may impact a Cloudflare One Client device based on its geographic location or network connection.

This Internet outage and traffic anomaly data is pulled from Cloudflare Radar ↗. All Internet outage and traffic anomaly observations can be viewed in the Radar Outage Center ↗.

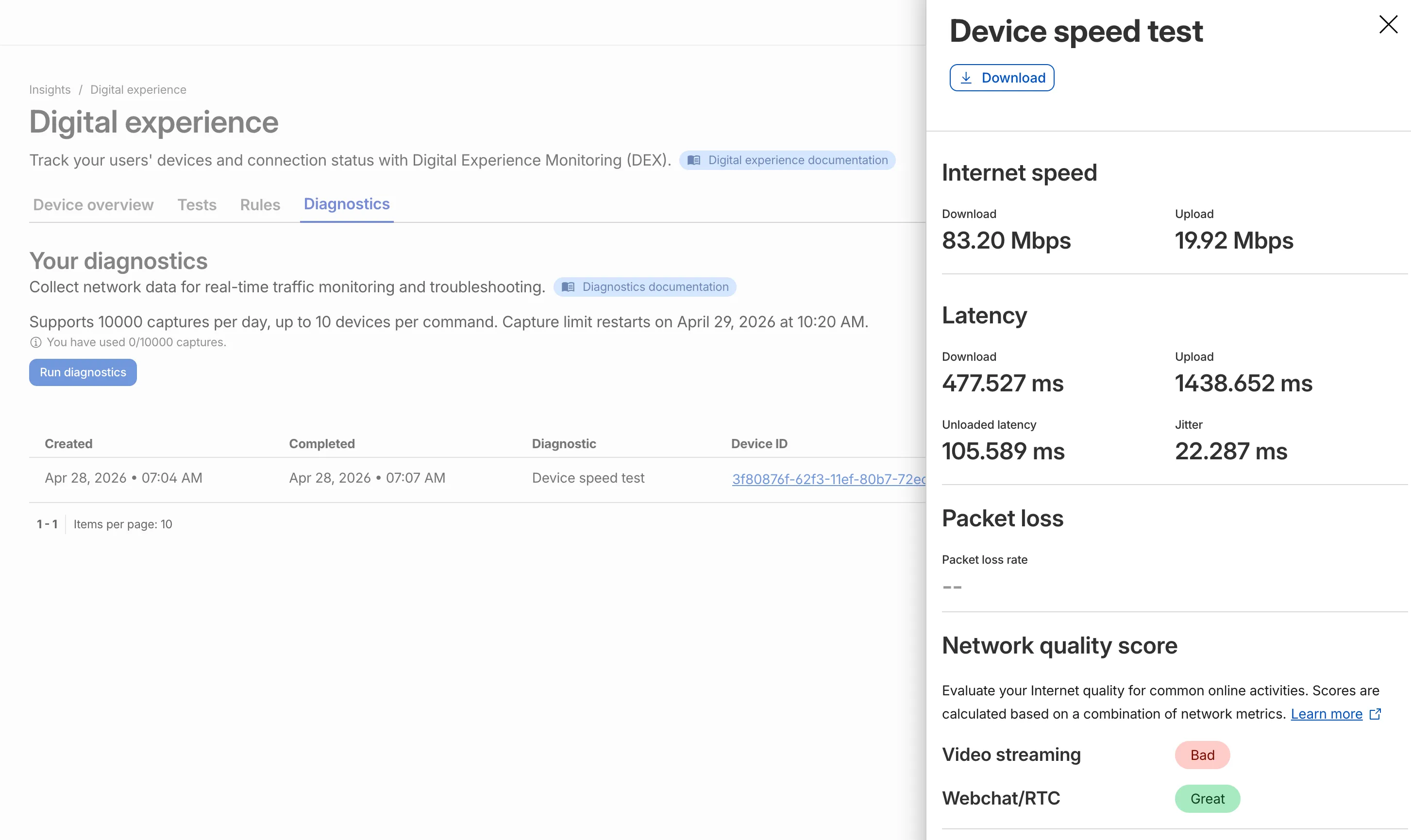

IT teams can now remotely run speed tests from the Cloudflare One Client to Cloudflare's network edge.

Each speed test includes the following metrics:

- Internet speed: download and upload throughput

- Latency: download, upload, unloaded latency, and jitter

- Network quality score: video streaming, webchat/real-time communication (RTC)

In the Cloudflare dashboard ↗, go to Zero Trust > Insights > Digital experience > Diagnostics and select Run diagnostics to use the feature today.

You can now create, view, and manage DLP detection entries outside of profiles.

Detection entries are no longer hidden inside individual profiles. Administrators can manage detection entries directly from the Detection entries section and use them in custom DLP profiles.

For more information, refer to Configure detection entries.

Cloudflare DLP now includes a new predefined profile designed to detect PII records that contain multiple types of personal data: Personally Identifiable Information (PII) Record.

Most predefined and custom DLP profiles match when any enabled detection entry matches. The Personally Identifiable Information (PII) Record profile is different. It only matches when at least three unique detection entries are found in close proximity, which reduces false positives from standalone values that may not represent a real PII record.
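The matching rule can be sketched as a sliding window over detection offsets. This is only an illustration of the "at least three unique entries in close proximity" idea, not Cloudflare's matching logic; the `Detection` shape, the function name, and the 200-character window are assumptions, since the source does not define how proximity is measured.

```typescript
// Illustrative sketch only; the window size and data shape are assumptions.

interface Detection {
  entry: string; // detection entry name, for example "Email Address"
  offset: number; // character offset of the match in the content
}

// Returns true when some window of `windowChars` characters contains
// matches from at least `minUnique` distinct detection entries.
function matchesPiiRecord(
  detections: Detection[],
  windowChars: number = 200,
  minUnique: number = 3,
): boolean {
  const sorted = detections.slice().sort((a, b) => a.offset - b.offset);
  const counts = new Map<string, number>();
  let left = 0;
  for (let right = 0; right < sorted.length; right++) {
    const current = sorted[right];
    counts.set(current.entry, (counts.get(current.entry) ?? 0) + 1);
    // Shrink the window from the left until it spans at most windowChars.
    while (current.offset - sorted[left].offset > windowChars) {
      const dropped = sorted[left];
      const next = (counts.get(dropped.entry) ?? 0) - 1;
      if (next <= 0) counts.delete(dropped.entry);
      else counts.set(dropped.entry, next);
      left++;
    }
    if (counts.size >= minUnique) return true;
  }
  return false;
}
```

Under these assumptions, a name, an email address, and a phone number found within a few lines of each other would match, while three email addresses in a row, or three different entries scattered far apart, would not.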

Detection entries included in the profile:

- AU Passport Number

- American Express Card Number

- Diners Club Card Number

- US Driver's License Number

- Email Address

- Full Name

- US Mailing Address

- Mastercard Card Number

- US Individual Tax Identification Number (ITIN)

- US Passport Number

- US Phone Number

- Union Pay Card Number

- United States SSN Numeric Detection

- Visa Card Number

For more information, refer to predefined DLP profiles.

You can now disable Cloudflare's reverse proxy across all zones in your account simultaneously using the new `enforce_dns_only` setting. When enabled, Cloudflare responds to DNS queries for all proxied records with your origin IP addresses instead of Cloudflare's anycast IPs. This account-level kill switch is designed for incident response scenarios where you need to quickly route traffic directly to your origin servers.

- Account-level: Affects all zones in the account simultaneously with a single API call.

- Non-destructive: Does not modify your DNS records. Disabling the setting restores normal proxy behavior.

- API-only: Available through the API only, not in the Cloudflare dashboard.

Included: Standard proxied A, AAAA, and CNAME records, Load Balancing records, and records matching Worker routes.

Excluded: Spectrum applications, Cloudflare Tunnel CNAMEs, R2 custom domains, Web3 gateways, and Workers custom domains continue to operate normally.

- Verify your origin servers can handle direct traffic without Cloudflare's caching and filtering.

- Review which origin IPs will become publicly visible through DNS queries.

- Test the API in a staging account before relying on it for incident response.

Available via API to all Cloudflare customers.

For information on how to use it, refer to the Enforce DNS-only developer documentation.

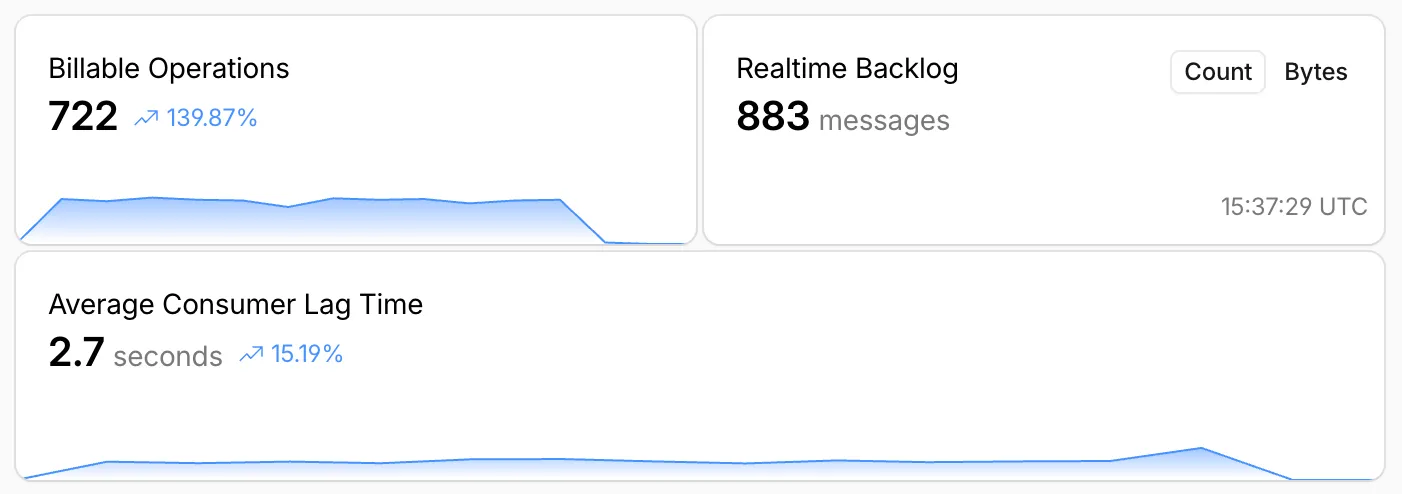

Queues, Cloudflare's managed message queue, now exposes realtime backlog metrics via the dashboard, REST API, and JavaScript API. Three new fields are available:

- `backlog_count`: the number of unacknowledged messages in the queue

- `backlog_bytes`: the total size of those messages in bytes

- `oldest_message_timestamp_ms`: the timestamp of the oldest unacknowledged message

The following endpoints also now include a `metadata.metrics` object on the result field after successful message consumption:

- `/accounts/{account_id}/queues/{queue_id}/messages/pull`

- `/accounts/{account_id}/queues/{queue_id}/messages`

- `/accounts/{account_id}/queues/{queue_id}/messages/batch`

Call `env.QUEUE.metrics()` to get realtime backlog metrics:

```typescript
const {
  backlogCount, // number
  backlogBytes, // number
  oldestMessageTimestamp, // Date | undefined
} = await env.QUEUE.metrics();
```

`env.QUEUE.send()` and `env.QUEUE.sendBatch()` also now return a metrics object on the response.

You can also query these fields via the GraphQL Analytics API or view realtime backlog on the dashboard ↗.
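One way to act on these metrics is to decide when a producer should slow down. The sketch below is a hypothetical helper, not part of the Queues API: the `QueueMetrics` interface mirrors the three fields described above, while `BacklogLimits`, `isBacklogged`, and the threshold values are assumptions for illustration.

```typescript
// Illustrative sketch only; the helper and thresholds are hypothetical.

// Assumed shape, mirroring the fields returned by env.QUEUE.metrics().
interface QueueMetrics {
  backlogCount: number;
  backlogBytes: number;
  oldestMessageTimestamp?: Date;
}

// Hypothetical limits chosen by the application.
interface BacklogLimits {
  maxCount: number; // maximum acceptable unacknowledged messages
  maxAgeMs: number; // maximum acceptable age of the oldest message
}

// Returns true when the queue holds too many messages or the oldest
// unacknowledged message has been waiting too long.
function isBacklogged(
  metrics: QueueMetrics,
  limits: BacklogLimits,
  now: Date = new Date(),
): boolean {
  const oldest = metrics.oldestMessageTimestamp;
  const ageMs = oldest === undefined ? 0 : now.getTime() - oldest.getTime();
  return metrics.backlogCount > limits.maxCount || ageMs > limits.maxAgeMs;
}
```

In a Worker, you could pass the result of `await env.QUEUE.metrics()` to `isBacklogged` and, for example, return a `429` response to producers while the backlog drains.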

For more information, refer to Queues metrics.

The Support button in the dashboard global navigation header now takes you directly to the Cloudflare Support Portal ↗, eliminating the previous dropdown menu.

This change ensures that when you need help, you spend less time navigating the UI and more time getting the answers you need.

- Previous behavior: Selecting ? Support opened a dropdown menu with various links (Help Center, Cloudflare Community, etc.).

- New behavior: Selecting Support immediately redirects your current tab to the Support Portal.

To learn more about the resources available to you, refer to the Cloudflare Support documentation ↗.

Cache Response Rules now work with Version Management. You can version response-phase cache settings and promote them through environments, just like Cache Rules and other supported configurations.

Previously, Cache Response Rules were excluded from zone versioning. Any response-phase rule you created applied globally across all environments with no way to test changes in staging first. Cache Rules already supported versioning, but the response phase, where you modify `Cache-Control` directives, manage cache tags, and strip headers, did not.

Cache Response Rules are now fully integrated with Version Management. You can create or modify response-phase rules within a version, and those changes stay scoped to that version until promoted.

- Safe rollout of cache behavior changes: Test response-phase rules in a staging environment before promoting to production. Catch unintended caching side effects early.

- Parity with Cache Rules: Cache Response Rules now follow the same versioning workflow as Cache Rules, so you can manage all cache configuration through a single promotion pipeline.

- Independent environment control: Run different response-phase cache settings per environment. For example, strip `Set-Cookie` headers in staging to validate cacheability without affecting production traffic.

Configure Cache Response Rules in the Cloudflare dashboard ↗ under Caching > Cache Rules, or via the Rulesets API. For more details, refer to the Cache Response Rules documentation and the Version Management documentation.

Cloudflare-generated 5xx error responses now return structured JSON and Markdown when agents request them, matching the format already available for 1xxx errors. Responses follow RFC 9457 (Problem Details for HTTP APIs) ↗ and include a `Retry-After` HTTP header on retryable codes.

5xx coverage. Ten Cloudflare-generated error codes (500, 502, 504, 520-526) now serve structured responses. These are errors Cloudflare itself generates when it cannot reach or understand the origin server. Origin-generated 5xx responses that Cloudflare passes through are not affected.

Fault attribution. The `error_category` field tells agents where the fault lies:

- `origin` (502, 504, 520-524): the origin server is responsible. Transient; retry with the backoff in `retry_after`.

- `cloudflare` (500): Cloudflare's fault, not the website or the request. Short retry.

- `ssl` (525, 526): the origin's TLS configuration is broken. Do not retry.
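An agent could turn the fault attribution above into a retry decision along these lines. This is a sketch, not a documented client: the `ProblemDetails` interface only lists fields named in this entry plus the standard `status` member, and the code assumes `retry_after` is expressed in seconds, which the source does not state explicitly.

```typescript
// Illustrative sketch only; assumes retry_after is a number of seconds.

interface ProblemDetails {
  status: number;
  error_category?: string; // "origin", "cloudflare", or "ssl"
  retry_after?: number; // assumed to be seconds
}

// Returns how long to wait before retrying, in milliseconds,
// or null when the request should not be retried.
function retryDelayMs(problem: ProblemDetails): number | null {
  const category = problem.error_category;
  if (category !== "origin" && category !== "cloudflare") {
    return null; // "ssl" and unknown categories are treated as non-retryable
  }
  if (typeof problem.retry_after !== "number" || problem.retry_after < 0) {
    return null; // no usable backoff hint
  }
  return problem.retry_after * 1000;
}
```

Treating unknown categories as non-retryable is a conservative design choice for this sketch; an agent with its own backoff policy might fall back to that instead.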

Retry-After header. Retryable codes (500, 502, 504, 520-524) include a `Retry-After` HTTP header matching the `retry_after` body field. Non-retryable codes (525, 526) do not include the header.

| Request header sent | Response format |
| --- | --- |
| `Accept: application/json` | JSON (`application/json` content type) |
| `Accept: application/problem+json` | JSON (`application/problem+json` content type) |
| `Accept: application/json, text/markdown;q=0.9` | JSON |
| `Accept: text/markdown` | Markdown |
| `Accept: text/markdown, application/json` | Markdown (equal `q`, first-listed wins) |
| `Accept: */*` | HTML (default) |

Available now for all zones on all plans.
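The selection rules in the table above can be approximated with a small content negotiation sketch. This is not Cloudflare's negotiation code; `pickFormat` is a hypothetical function that reproduces the listed rows by choosing the supported type with the highest `q` value and keeping the first-listed type on ties.

```typescript
// Illustrative sketch only; reproduces the table rows, not the real implementation.

type ErrorFormat = "json" | "markdown" | "html";

// Picks the response format for an Accept header value.
// Highest q wins; on equal q, the first-listed supported type wins.
function pickFormat(accept: string): ErrorFormat {
  let best: { format: ErrorFormat; q: number } | undefined;
  for (const part of accept.split(",")) {
    const [rawType = "", ...params] = part.split(";").map((s) => s.trim());
    const type = rawType.toLowerCase();
    let format: ErrorFormat | undefined;
    if (type === "application/json" || type === "application/problem+json") {
      format = "json";
    } else if (type === "text/markdown") {
      format = "markdown";
    }
    if (format === undefined) continue; // unsupported or wildcard type
    let q = 1;
    for (const param of params) {
      const match = /^q=([0-9.]+)$/i.exec(param);
      if (match) q = Number(match[1]);
    }
    // Strictly greater, so an earlier entry keeps priority on ties.
    if (q > 0 && (best === undefined || q > best.q)) best = { format, q };
  }
  return best ? best.format : "html";
}
```

For example, `pickFormat("text/markdown, application/json")` returns `"markdown"` because both types have an implicit `q` of 1 and Markdown is listed first.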

Get JSON response for error 522:

```bash
curl -s --compressed -H "Accept: application/json" -A "TestAgent/1.0" -H "Accept-Encoding: gzip, deflate" "<YOUR_DOMAIN>/cdn-cgi/error/522" | jq .
```

Check presence of the `Retry-After` HTTP header associated with the JSON response for error 521:

```bash
curl -s --compressed -D - -o /dev/null -H "Accept: application/json" -A "TestAgent/1.0" -H "Accept-Encoding: gzip, deflate" "<YOUR_DOMAIN>/cdn-cgi/error/521" | grep -i retry-after
```

References: